“Finite Field Arithmetic.” Chapter 14A: Barrett's Modular Reduction. (Part 1 of 2)

This article is part of a series of hands-on tutorials introducing FFA, or the Finite Field Arithmetic library. FFA differs from the typical "Open Sores" abomination, in that -- rather than trusting the author blindly with their lives -- prospective users are expected to read and fully understand every single line. In exactly the same manner that you would understand and pack your own parachute. The reader will assemble and test a working FFA with his own hands, and at the same time grasp the purpose of each moving part therein.

- Chapter 1: Genesis.

- Chapter 2: Logical and Bitwise Operations.

- Chapter 3: Shifts.

- Chapter 4: Interlude: FFACalc.

- Chapter 5: "Egyptological" Multiplication and Division.

- Chapter 6: "Geological" RSA.

- Chapter 7: "Turbo Egyptians."

- Chapter 8: Interlude: Randomism.

- Chapter 9: "Exodus from Egypt" with Comba's Algorithm.

- Chapter 10: Introducing Karatsuba's Multiplication.

- Chapter 11: Tuning and Unified API.

- Chapter 12A: Karatsuba Redux. (Part 1 of 2)

- Chapter 12B: Karatsuba Redux. (Part 2 of 2)

- Chapter 13: "Width-Measure" and "Quiet Shifts.

- Chapter 14A: Barrett's Modular Reduction. (Part 1 of 2)

You will need:

- A Keccak-based VTron (for this and all subsequent chapters.)

- All of the materials from Chapters 1 - 13.

- There is no vpatch in Chapter 14A.

On account of the substantial heft of this chapter, I have cut it into two parts, 14A and 14B; you are presently reading 14A, which consists strictly of the exposition of Barrett's Reduction algorithm and its proof of correctness. 14B will appear here in the course of the next several days; it contains the Ada realization of said algorithm, along with performance measurements.

Replies to the reader mail bag from Chapter 13 will also appear in 14B.

Recall that in Chapter 12B, we determined that Knuth's Divider accounted for most of the CPU cost of modular exponentiation in FFA.

Each invocation of our modular exponentiator on, e.g., a 4096-bit Base/Exponent/Modulus tuple, requires, in addition to other work, 8192 modular reductions using FZ_Mod; and in each of these, a 8192-bit quantity is divided by the 4096-bit modulus to calculate a remainder. This is not merely expensive, but, more importantly, on account of Knuth's Divider, it is cubically (i.e. O(N^3), where N is your FFA Bitness) expensive!

Fortunately, there are several published approaches to substantially lightening the cost of modular exponentiation, by deriving a "pre-computed" datum from a given modulus -- in such a way that the necessary series of repeated reductions modulo that modulus can be performed "on the cheap", using a handful of inexpensive (sub-quadratic) operations.

I presently know of two traditional algorithms of this kind which admit a constant-spacetime implementation.

The first is Montgomery's Reduction. It is quite efficient, but imposes painful constraints on the domains of the modulus and the dividend. Specifically, if an integer to be reduced is not coprime with the modulus, the output of the calculation will be rubbish. And testing for this condition in constant time is quite expensive-- and even if it were not, if the test were to reveal a prohibited input, one would be forced to fall back to a different modular reduction method with a different timing! This would destroy the constant time guarantee. Montgomery's Reduction is wildly popular in single-purpose RSAtrons, but is entirely unsuitable for use in a general-purpose arithmetizer like FFA. And therefore nothing more will be said of it in this series.

The second of these algorithms, and the subject of this chapter, is Barrett's Reduction. This method, if implemented correctly, will run in constant spacetime and produce correct modular reductions -- at the cost of a single double-bitness Knuth division per change of modulus, and a handful of relatively-inexpensive multiplications and subtractions per change of dividend.

Carrying out Barrett's Reduction in constant spacetime demands constant-spacetime "bitness measure" and shift operations, which were introduced in Chapter 13. (If you have not yet satisfied yourself that you understand chapters 1 - 13, don't even think about proceeding here! Go back and study!)

Implementing (and grasping) this algorithm is not difficult, but to make it suitable for use in cryptographic arithmetic, a proof of correctness of this particular implementation is absolutely necessary: "a sapper errs only once!" It is very easy to produce a subtly-broken implementation of Barrett's Reduction which yields rubbish on 0.01% (or less) of the input domain, while giving perfectly-correct outputs on the rest!

Therefore we must have the proof. And the reader who is contemplating any serious use for FFA must not only read and understand this proof, but verify that the offered implementation actually corresponds to the algorithm given in said proof. The same thing has already been said about FFA as a whole. But it applies especially acutely to this chapter! You will not find this proof in Knuth's AOP, or even in Barrett's original 1986 paper. As far as I know, the proof in this article is the only public one which completely treats a constant-time implementation of Barrett's Reduction.

And if you, dear reader, after working carefully through this chapter and the next, have any doubt at all with respect to the correctness of the proof, or of the actual program's conforming to the proof, do not use the program! And please leave a comment!

Having said all of this, let's proceed with the algorithm and its correctness proof. The Ada implementation will appear in the next chapter, 14B.

Observe that for integers X ≥ 0, M > 0, X mod M = X - M⌊X / M⌋. Therefore, if we can compute ⌊X / M⌋, we can determine X mod M.

Barrett gave the following equivalence for X / M:

| X | 2k | X | 1 | |||

| — | = | —— | × | —— | × | ————— |

| M | M | 2j | 2k - j |

... where k and j are integers, the choice of values for which will depend on the concrete implementation, and will be discussed in detail further below.

Barrett's clever pill consists of the following transformation of the equivalence into pure-integer arithmetic:

| X | ( | ⌊ | 2k | ⌋ | 2k mod M | ) | ( | ⌊ | X | ⌋ | X mod 2j | ) | 1 | |||||

| — | = | —— | + | ———————— | × | —— | + | ———————— | × | ————— | ||||||||

| M | M | M | 2j | 2j | 2k - j |

For clarity, let's bring out the remainder subterms:

| R1 | = | 2k mod M |

| R2 | = | X mod 2j |

...then, the following equation will hold true:

| X | ⌊2k / M⌋⌊X / 2j⌋ | R2⌊2k / M⌋ | R1⌊X / 2j⌋ | R1R2 | ||||

| — | = | ——————————————— | + | —————————— | + | —————————— | + | ————— |

| M | 2k - j | 2k | M2k - j | M2k |

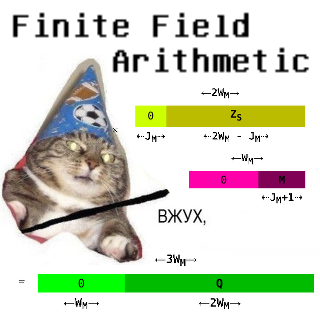

Marked in green is Barrett's approximation of the division X / M. This approximation is inexact, i.e. it will differ from the true value of X / M by some finite quantity, equal to the sum of the three terms of the error equation, marked in yellow. If we can put a bound on this quantity, it will be possible to write a constant-time modular reducer.

The quantity ⌊2k / M⌋ is to be computed only once, per change of the modulus M. We will refer to it later as the "Barrettoid". This is the item we will want to pre-compute at the beginning of a modular exponentiation, and use for all of the required modular reductions afterwards.

The modular reduction of a particular X by modulus M, if the Barrettoid corresponding to M is available, requires no Knuth division, but can instead be performed using a series of relatively-inexpensive shifts, multiplications, additions, and subtractions.

Now let's find that error bound, along with the domains of the parameters where it will apply.

Our approach to this will be: if each of the error terms can be guaranteed to be less than 1, the sum of all three terms can be guaranteed to be less than 3, and then a Barrett's Reduction can be performed in constant-time.

Let's start with the first error term:

| R2⌊2k / M⌋ | (X mod 2j)⌊2k / M⌋ | |||

| —————————— | = | ————————————————————— | < | 1 |

| 2k | 2k |

In order for this inequality to hold true, the denominator must exceed the numerator:

(X mod 2j)⌊2k / M⌋ < 2k

It is clear that the left-hand side of the inequality, when variables other than X are held constant, reaches its maximum when X ≡ 2j - 1, while the right-hand side does not depend on X. Therefore, we can rewrite it as:

(2j - 1)⌊2k / M⌋ < 2k

Observe that ⌊2k / M⌋ × M ≤ 2k. It is then known that:

(2j - 1)⌊2k / M⌋M < M2k,

(2j - 1)2k < M2k,

2j - 1 < M,

2j ≤ M

must be true for the inequality to hold.

Now let's take the same approach with the second error term:

| R1⌊X / 2j⌋ | (2k mod M)⌊X / 2j⌋ | |||

| —————————— | = | ——————————————————— | < | 1 |

| M2k - j | M2k - j |

In order for this inequality to hold true, the denominator must exceed the numerator:

(2k mod M)⌊X / 2j⌋ < M2k - j

It is clear that the left-hand side of the inequality, when variables other than M are held constant, reaches its maximum when 2k mod M = M - 1. Therefore, we can rewrite it as:

(M - 1)⌊X / 2j⌋ < M2k - j

Observe that ⌊X / 2j⌋ × 2j ≤ X. It is then known that:

(M - 1)⌊X / 2j⌋2j < M2k - j2j,

(M - 1)X < M2k,

... which will hold true for all possible M if and only if:

X ≤ 2k.

Finally, let's apply this approach to the third error term:

| R1R2 | (2k mod M)(X mod 2j) | |||

| ——————— | = | ——————————————————— | < | 1 |

| M2k | M2k |

In order for this inequality to hold true, the denominator must exceed the numerator:

(2k mod M)(X mod 2j) < M2k

It is clear that the left-hand side of the inequality, when variables other than M and X are held constant, reaches its maximum when 2k mod M = M - 1 and X ≡ 2j - 1. Therefore, we can rewrite it as:

(M - 1)(2j - 1) < M2k

... which will hold true for all possible M if and only if:

2j - 1 < 2k,

2j ≤ 2k,

j ≤ k.

And so, we now know that if we satisfy the constraints:

2j ≤ M

X ≤ 2k

j ≤ k

... the error terms will obey the equation:

| R2⌊2k / M⌋ | R1⌊X / 2j⌋ | R1R2 | ||||||

| 0 | ≤ | —————————— | + | —————————— | + | ————— | < | 3 |

| 2k | M2k - j | M2k |

And from this fact, it follows that:

| ⌊ | X | ⌋ | ⌊2k / M⌋⌊X / 2j⌋ | |||

| — | = | ——————————————— | + | E ∈ {0, 1, 2} | ||

| M | 2k - j |

It then is clear that:

| ⌊2k / M⌋⌊X / 2j⌋ | ||||||||

| X mod M | = | X | - | M | × | ——————————————— | - | E × M |

| 2k - j |

That is, to compute the modular reduction of X modulo M, we calculate Barrett's approximation, multiply the result by M, subtract this quantity from X, and then finally must subtract M zero, once, or twice, i.e. until the working register is less than M and thereby contains the reduced X.

The algorithm of our Barrettron will then take this form:

Algorithm 1:

To compute the reduction R := X mod M:

- Xs := X >> j

- Z := Xs × ⌊2k / M⌋

- Zs := Z >> k - j

- Q := Zs × M

- R := X - Q

- If R ≥ M:

R := R - M - If R ≥ M:

R := R - M - R is now equal to X mod M.

A sharp reader will have noticed that Algorithm 1 is not directly usable under the constraints of FFA, where conditional branching on operand bits is forbidden, and all registers subject to multiplication or user I/O must have powers-of-two bitnesses. Steps 6 and 7 violate the former constraint; the potentially 2k + 1 bitness of the Barrettoid ⌊2k / M⌋ (if M were to equal 1) violates the latter.

And so, let's re-write it to obey these restrictions, and at the same time incorporate concrete values for the parameters k and j to make for correctly-FFA-sized input, output, and intermediate result registers; we will also split the algorithm into separate pre-computation and reduction phases.

Algorithm 2:

For each new modulus M, we will precompute the constants that correspond to it:

- WM := FZ_Bitness(M)

This is simply the register bitness of the modulus M, and, in ordinary circumstances, will correspond to the currently-set FFAcalc bitness.

0 < M < 2WM is then the domain of M. - KM := 2 × WM

This corresponds to the parameter k in the proof; and it also equals the register bitness available for any X that will be reduced, since we will be using the Barrettron only inside modular exponentiation, where any given X will be the product of two multiplicands each having the same bitness as M.

0 ≤ X < 2KM is then the domain of X. - BM := The lower KM bits of ⌊2KM / M⌋

This is the Barrettoid of the given M, constrained to a KM-bit register. If M ≠ 1, BM is valid; otherwise we have the degenerate case, and the output of the reduction calculation must be nulled (given as any integer reduced modulo 1 will equal zero.)

0 < BM < 2KM. - DM := Top bit of ⌊2KM / M⌋

If and only if equal to 1, indicates that the modulus M is the degenerate case where M = 1.

0 ≤ DM ≤ 1. - JM := Measure(M) - 1

This corresponds to the parameter j in the proof.

0 ≤ JM < WM and 2JM ≤ M < 2JM + 1. - SM := KM - JM

This corresponds to the expression k - j in the proof.

Observe that WM < SM ≤ KM.

For each new input X, to compute the reduction R := X mod M:

- Xs := X >> JM

- Z := Xs × BM

- Zs := Z >> SM

- Q := Zs × M

- R := X - Q

- R := R - M, C := Borrow

- R := R + (M × C)

- R := R - M, C := Borrow

- R := R + (M × C)

- R := R - (R × DM)

- R is now equal to X mod M.

The "reversion" steps in Algorithm 2 (7, 9, and 10) are described arithmetically for clarity, but we will want to carry them out using the much-faster FZ_Mux operator, rather than via arithmetic.

In Chapter 14B, we will walk through the Ada implementation of Algorithm 2, and measure the performance gained from rewriting our modular exponentiator to make use of Barrett's Reduction instead of the classical Knuthian reduction.

Stay tuned!

Here's a quick pari/gp script to play with the algo and check its correctness on some random inputs:

barret(x,m,j,k,dbg=0)={

if(2^j>m,print("Bad C1");return());

if(x>2^k,print("Bad C2");return());

if(j>k,print("Bad C3");return());

if(dbg,

print("x = ",x);

print("m = ",m);

print("j = ",j);

print("k = ",k);

);

my(t=x - m * floor((floor(2^k/m) * floor(x/2^j))/2^(k-j)));

for(i=1,2,if(t>=m,t-=m));

return(t);

};

test(N)={

for(i=1,N,

my(x=random(2^128));

my(M=random(2^64));

my(j=random(floor(log(M)/log(2))));

/* k is hardcoded to 128 */

my(b=barret(x,M,j,128));

if(b!=x%M,

print("Sad X = ",x,"; M = ",M);

print("Barret = ",b);

print("Mod = ",x%M)

);

);

};

Obviously this is in no way enough to forgo working out the proof.