“Finite Field Arithmetic.” Chapter 14A-Bis: Barrett's Modular Reduction. (Physical Bounds Proof.)

This article is part of a series of hands-on tutorials introducing FFA, or the Finite Field Arithmetic library. FFA differs from the typical "Open Sores" abomination, in that -- rather than trusting the author blindly with their lives -- prospective users are expected to read and fully understand every single line. In exactly the same manner that you would understand and pack your own parachute. The reader will assemble and test a working FFA with his own hands, and at the same time grasp the purpose of each moving part therein.

- Chapter 1: Genesis.

- Chapter 2: Logical and Bitwise Operations.

- Chapter 3: Shifts.

- Chapter 4: Interlude: FFACalc.

- Chapter 5: "Egyptological" Multiplication and Division.

- Chapter 6: "Geological" RSA.

- Chapter 7: "Turbo Egyptians."

- Chapter 8: Interlude: Randomism.

- Chapter 9: "Exodus from Egypt" with Comba's Algorithm.

- Chapter 10: Introducing Karatsuba's Multiplication.

- Chapter 11: Tuning and Unified API.

- Chapter 12A: Karatsuba Redux. (Part 1 of 2)

- Chapter 12B: Karatsuba Redux. (Part 2 of 2)

- Chapter 13: "Width-Measure" and "Quiet Shifts."

- Chapter 14A: Barrett's Modular Reduction. (Part 1 of 2)

- Chapter 14A-Bis: Barrett's Modular Reduction. (Physical Bounds Proof.)

You will need:

- All of the materials from Chapters 1 - 14A

- There is no vpatch in Chapter 14A-Bis.

Given the trickiness of Barrett's Reduction under the constraints of FFA, and the fact that we want to hard-guarantee, as adults do, the exact amount of physical space required to hold the intermediate results of the computation, it is necessary to extend the proof of Chapter 14A with a physical bounds proof -- before we can dare to look at the corresponding program code.

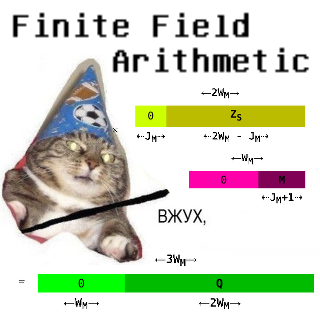

We will illuminate said proof with "electrical" illustrations, in the style of Chapter 12A.

Please print out and refer to Algorithm 2 from Chapter 14A when working through this Chapter.

First, three lemmas:

Lemma 1:

For any integers A ≥ 0, B > 0, C = ⌊A / B⌋, Measure(C) ≤ Measure(A) - Measure(B) + 1.

Lemma 2:

For any integers A ≥ 0, B ≥ 0, C = A × B, Measure(C) ≤ Measure(A) + Measure(B).

Lemma 3:

For any integers A ≥ 0, B ≥ 0, C = A + B, Measure(C) ≤ Max(Measure(A), Measure(B)) + 1.

If the correctness of these is not obvious, dear reader, stop reading and prove them. Right now.

We will use ⇠dashed arrows⇢ to refer to the variant bitness bounds of hypothetical zero and nonzero segments of registers, for the purpose of min/max width analysis of the intermediate results in our computation. ←Solid arrows→ will refer to invariant bounds (i.e. the physical sizes of particular registers.)

First, let's describe the physical space occupied by the quantities which must exist prior to performing Barrett's Reduction of a given X.

We begin with the modulus: 0 < M < 2WM. It lives in a WM-bit register:

| ←WM→ | |

| M |

Now let's picture, strictly for illustrative purposes, an M which is a bit less than "half-full":

| ←WM→ | ||

| 0 | M | |

| ⇠JM+1⇢ | ||

Recall that we picked the concrete value of JM (0 ≤ JM < WM) to equal one less than the measure of M, i.e.,

JM = Measure(M) - 1.

This is a valid choice under the inequalities yielded by the Ch. 14A proof.

Observe that this value is a secret (just like M and X) and therefore it is forbidden in FFA to branch on its bits, index memory by it, etc. The only mechanical thing we will do with it is to shift certain registers (via a constant-time method) by it.

Now, let's describe the Barrettoid BM corresponding to a given M.

By Lemma 1, the Measure of 0 < BM < 2KM will always be less than or equal to:

(2WM + 1) - Measure(M) + 1 = 2WM - JM.

And so, BM will look like:

| ←2WM→ | ||

| 0 | BM | |

| ⇠JM⇢ | ⇠2WM - JM⇢ | |

Now, of course, we also need the dividend:

0 ≤ X < 2KM is the integer to be reduced modulo M, and its register has twice the bitness of M:

| X | |

| ←2WM→ |

Now, let's describe the space required to carry out Barrett's Reduction itself, where in the end we obtain X mod M.

The first of the two shifts in the algorithm, where we obtain the term ⌊X / 2j⌋:

1: Xs = X >> JM

| ←2WM→ | ||

| 0 | XS | |

| ⇠JM⇢ | ⇠2WM - JM⇢ | |

The first of the two multiplications. This one is 2WM × 2WM-sized, and it will give us the intermediate result:

⌊X / 2j⌋⌊2k / M⌋.

The second multiplicand is our pre-computed Barrettoid for the current M. And so:

2: Z = Xs × BM

By Lemma 2:

| ←2WM→ | |||||

| 0 | XS | ||||

| ⇠JM⇢ | ⇠2WM - JM⇢ | ||||

| ←2WM→ | |||||

| × | 0 | BM | |||

| ⇠JM⇢ | ⇠2WM - JM⇢ | ||||

| ←4WM→ | |||||

| = | 0 | Z | |||

| ⇠2JM⇢ | ⇠4WM - 2JM⇢ | ||||

The second of the two shifts. This one gets us the entire Barrett approximation of the quotient, i.e. ⌊X / M⌋ + E :

| ⌊2k / M⌋⌊X / 2j⌋ |

| ——————————————— |

| 2k - j |

3: Zs := Z >> 2WM - JM

| ←4WM→ | ||

| 0 | ZS | |

| ⇠2WM + JM⇢ | ⇠2WM - JM⇢ | |

Observe that we do not need to spend the cost of shifting the whole of Z in order to obtain ZS, given as the shift will always be of at least WM+1-bits (since 0 ≤ JM < WM). Ergo, the lower WM bits of Z are always discarded in this process, and can be simply ignored:

| ←4WM→ | ||||

| 0 | Z | |||

| ⇠2JM⇢ | ⇠4WM - 2JM⇢ | |||

| ←4WM→ | ||||

| = | ZHi | ZMid | ZLo | |

| ←WM→ | ←2WM→ | ←WM→ | ||

... so it actually suffices to right-shift only the 3WM-bit segment consisting of ZHi and ZMid, by WM - JM bits, and afterwards to take the ZMid-wide lower segment of the output as the resulting ZS.

ZS always fits inside a 2WM-bit register:

| ←2WM→ | ||

| 0 | ZS | |

| ⇠JM⇢ | ⇠2WM - JM⇢ | |

Here, we have something a little more interesting. We have an asymmetric (2WM × WM-sized) multiplication, where we want to multiply ZS, the Barrett approximation of the quotient, by the modulus M:

4: Q := Zs × M

| ←2WM→ | |||||

| 0 | ZS | ||||

| ⇠JM⇢ | ⇠2WM - JM⇢ | ||||

| ←WM→ | |||||

| × | 0 | M | |||

| ⇠JM+1⇢ | |||||

|

|||||

| ←3WM→ | |||||

| = | 0 | Q | |||

| ←WM→ | ←2WM→ | ||||

By Lemma 2, a 2WM-bit × WM-bit product in the general case must occupy 3WM bits; while, looking closer, it would appear that, given as we have multiplied the (2WM - JM)-bit contents of ZS by the JM+1-bit contents of M, we could end up with a 2WM+1-bit result...

However, in Chapter 14A we proved that:

| ⌊2k / M⌋⌊X / 2j⌋ | ||||||||

| X mod M | = | X | - | M | × | ——————————————— | - | E × M |

| 2k - j |

And so we know that Q ≤ X, and therefore, regardless of the value of JM, the term Q will always fit inside 2WM bits.

| ←2WM→ | |

| Q | |

| ←2WM→ | |

| X |

If the "secret" of this "miracle" eludes you, please go back to Chapter 14A and walk the proof, until it fits-in-head.

Now, we find the initial estimate of X mod M:

5: R := X - Q

| ←2WM→ | |

| X | |

| ←2WM→ | |

| - | Q |

| ←2WM→ | |

| = | R |

But do we actually need to perform a 2WM-bit subtraction here? It turns out that we don't, because R is at most W+2-bit. We know this because we have proven that at most two subtractions of M -- a WM-bit quantity -- will be required after this step to produce the final, correct X mod M, a WM-bit quantity. Therefore, what we actually need to do instead of the above, is:

| ←2WM→ | ||

| Ignore | X | |

| ←WM - L→ | ←WM + L→ | |

| ←2WM→ | ||

| - | Ignore | Q |

| ←WM - L→ | ←WM + L→ | |

| = | R | |

| ←WM + L→ | ||

L here is the bitness of our machine Word.

And by Lemma 3, we know that each of the following two gated subtractions can remove a maximum of one bit from the length of R; and from the Chapter 14A proof, we know that the final result will be of the same maximal bitness (i.e. will fit in the same size of FFA register) as the modulus M.

Now, we will do the first of our two gated subtractions. We will subtract a WM + L zero-extended (on the senior end, by one machine word) M from R, to obtain a difference S1 and a "borrow", C1.

6: S1 := R - M, C1 := Borrow

| ←WM + L→ | |||

| R | |||

| ←WM + L→ | |||

| - | 0 | M | |

| ←L→ | ←WM→ | ||

| ←WM + L→ | |||

| = | S1 | C1 ← Borrow | |

Now, the "gating" of that subtraction:

7: R := {C1=0 → S1, C1=1 → R}

| ←WM + L→ | ←WM + L→ | ||

| S1 | ⇒Mux(C1)⇐ | R | |

| ↳C1=0⇘ | ⇓ | ⇙C1=1↲ | |

| R | |||

| ←WM + L→ |

That is, if the subtraction in step 6 did not underflow, as indicated by C1 = 0, then we know that R was greater than or equal to M prior to step 6, and we then keep S1 (the result of the subtraction) and assign it back to R. On the other hand, if it did underflow, as indicated by C1 = 1, then we discard S1, and R stays as it was. Note that this process is to be carried out in constant time, via FZ_Mux.

Now, the second of the two gated subtractions. Exactly like in Step 6:

8: S2 := R - M, C2 := Borrow

| ←WM + L→ | |||

| R | |||

| ←WM + L→ | |||

| - | 0 | M | |

| ←L→ | ←WM→ | ||

| ←WM + L→ | |||

| = | S2 | C2 ← Borrow | |

And, finally, the "gating" of the second (and last) subtraction from Step 8:

9: R := {C2=0 → S2, C2=1 → R}

| ←WM + L→ | ←WM + L→ | ||

| S2 | ⇒Mux(C2)⇐ | R | |

| ↳C2=0⇘ | ⇓ | ⇙C2=1↲ | |

| R | |||

| ←WM + L→ |

Observe that we are not yet finished with R. The final result R = X mod M could still be one of two possible things:

- If M ≠ 1, the final result will be found in the bottom WM bits of R.

- If M = 1, then we had the degenerate case, and everything we did in steps 1-9 was simply to occupy constant-time, and has no bearing on the answer to the calculation of X mod M; the final result in this case is to be zero.

Ergo, we need one last Mux:

10: RFinal := {DM=0 → R, DM=1 → 0}

Recall that:

DM = Top bit of ⌊2KM / M⌋

If and only if equal to 1, indicates that the modulus M is the degenerate case where M = 1.

First, observe that:

| ←WM + L→ | |||

| R | |||

| = | |||

| 0 | RF | ||

| ←L→ | ←WM→ | ||

The two gated subtractions will at this point have zeroed any of the L upper bits of R that may have been previously non-zero.

And so, all that remains to do now, is:

| ←WM→ | ←WM→ | ||

| RF | ⇒Mux(DM)⇐ | 0 | |

| ↳DM=0⇘ | ⇓ | ⇙DM=1↲ | |

| R = X mod M | |||

| ←WM→ |

...and that's it, we have R = X mod M, as was proven already in Chapter 14A.

In the next Chapter, 14B, we will finally walk through the Ada program which implements this Barrettron. Stay tuned!