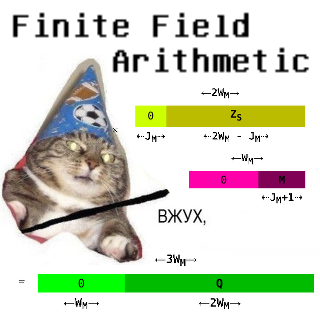

"Finite Field Arithmetic." Chapter 21A-Bis: Fix for Lethal Flaw in Ch.15's Greatest Common Divisor.

This article is part of a series of hands-on tutorials introducing FFA, or the Finite Field Arithmetic library. FFA differs from the typical "Open Sores" abomination, in that -- rather than trusting the author blindly with their lives -- prospective users are expected to read and fully understand every single line. In exactly the same manner that you would understand and pack your own parachute. The reader will assemble and test a working FFA with his own hands, and at the same time grasp the purpose of each moving part therein.

- Chapter 1: Genesis.

- Chapter 2: Logical and Bitwise Operations.

- Chapter 3: Shifts.

- Chapter 4: Interlude: FFACalc.

- Chapter 5: "Egyptological" Multiplication and Division.

- Chapter 6: "Geological" RSA.

- Chapter 7: "Turbo Egyptians."

- Chapter 8: Interlude: Randomism.

- Chapter 9: "Exodus from Egypt" with Comba's Algorithm.

- Chapter 10: Introducing Karatsuba's Multiplication.

- Chapter 11: Tuning and Unified API.

- Chapter 12A: Karatsuba Redux. (Part 1 of 2)

- Chapter 12B: Karatsuba Redux. (Part 2 of 2)

- Chapter 13: "Width-Measure" and "Quiet Shifts."

- Chapter 14A: Barrett's Modular Reduction. (Part 1 of 2)

- Chapter 14A-Bis: Barrett's Modular Reduction. (Physical Bounds Proof.)

- Chapter 14B: Barrett's Modular Reduction. (Part 2 of 2.)

- Chapter 15: Greatest Common Divisor.

- Chapter 16A: The Miller-Rabin Test.

- Chapter 17: Introduction to Peh.

- Chapter 18A: Subroutines in Peh.

- Chapter 18B: "Cutouts" in Peh.

- Chapter 18C: Peh School: Generation of Cryptographic Primes.

- Chapter 19: Peh Tuning and Demo Tapes.

- Chapter 20: "Litmus", a Peh-Powered Verifier for GPG Signatures.

- Chapter 20B: Support for Selected Ancient Hash Algos in "Litmus."

- Chapter 20C: Support for 'Clearsigned' GPG texts in "Litmus."

- Chapter 20D: "Litmus" Errata: Support for Nested Clearsigned Texts.

- Chapter 21A: Extended GCD and Modular Multiplicative Inverse. (Part 1 of 3).

- Chapter 21A-Bis: Fix for Lethal Flaw in Ch.15's Greatest Common Divisor.

You will need:

- A Keccak-based VTron (for this and all subsequent chapters.)

- All of the materials from Chapters 1 - 20D.

- ffa_ch21a_bis_fix_ch15_gcd.kv.vpatch

- ffa_ch21a_bis_fix_ch15_gcd.kv.vpatch.asciilifeform.sig

Add the above vpatches and seals to your V-set, and press to ffa_ch21a_bis_fix_ch15_gcd.kv.vpatch.

You should end up with the same directory structure as previously.

As of Chapter 21A-Bis, the versions of Peh and FFA are 250 and 200, respectively.

Now compile Peh:

cd ffacalc gprbuild |

But do not run it quite yet.

1. The Boojum.

This Chapter repairs a lethal bug in Chapter 15's "Constant-Time Iterative GCD" algorithm and its implementation.

While working on Ch. 21B, I found that the algorithm used in Ch. 15 is subtly broken on a very small but readily-constructible subset of its input domain.

My original (unpublished -- it was the nominal answer to Ch. 15 Exercise 2...) proof for this algo was fallacious: the calculation as formulated there is superficially correct, but it is not guaranteed to converge in Bitness(A) + Bitness(B) iterations! It is possible to construct pairs of inputs where we end up with an incorrect GCD result, and we will discuss several examples of these in this Chapter.

Cut corners are to be paid for, and I will admit that I would rather pay for this one now, with a Vpatch, a new proof, and an apology to readers, than later -- when I put FFA to use in anger, and offer BTC bounties for reporting defects.

Several supposedly-attentive FFA students at various times reported that they have eaten Ch. 15; and at least one of last year's readers even signed it. However, no one reported the defect, which I ended up uncovering while testing the final version of the Ch. 21 Extended-GCD against Ch. 15's conventional one. The breakage is triggered by a small subset of the possible input space where one or both input FZs to GCD consist almost entirely of ones (i.e. most of the bits are set.) No such case turned up in Ch. 15's randomly-generated test battery, reinforcing Dijkstra's famous observation that testing can reveal the presence of bugs, but never their absence.

Only proof can be relied on, and the proof had better be correct.

Let's proceed with two concrete examples of inputs which break the Ch. 15 GCD:

Ch. 15 GCD Counter-Example No. 1:

This Tape:

.FFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFBB .FFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFB G # |

... produces 4, when fed into a 256-bit-FZ run of Peh. But the two numbers are in fact co-prime, i.e. their actual GCD is 1.

Click here to see what happens when this Tape runs.

And this Tape:

Ch. 15 GCD Counter-Example No. 2:

.FFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEB .FFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFB G # |

... produces 0. Which is not only not the correct answer (again 1; the given numbers are yet again co-prime) but is in fact an impossible output of any GCD invocation apart from GCD(0,0) -- and is true there only by convention.

Click here to see what happens when this Tape runs.

It is possible to generate further counter-examples, but these are quite enough to demonstrate that the Ch. 15 GCD algorithm does not work as specified.

Now let's review the broken algo:

Chapter 15 Algorithm 3: Broken Constant-Time Iterative GCD.

For FZ integers A and B:

- Twos := 0

- Iterate Bitness(A) + Bitness(B) times:

- Ae := 1 - (A AND 1)

- Be := 1 - (B AND 1)

- A := A >> Ae

- B := B >> Be

- Twos := Twos + (Ae AND Be)

- D := |A - B|, C ← Borrow

- B := {C=0 → B, C=1 → A}

- A := D

- A := A << Twos

- A contains the GCD.

To those who have read Ch. 21A, the defect may be already apparent.

Specifically, when we introduce the Iterate Bitness(A) + Bitness(B)... condition in place of the original non-constant-time algo's Iterate until B = 0..., steps 8...10 become erroneous, in two respects.

First: in a run which requires exactly Bitness(A) + Bitness(B) - 1 iterations to reach the point where A = B, A will end up equal to zero, while the GCD ends up permanently trapped in B.

The root of the problem is that D := |A - B| becomes the new value of A even when D = 0 (i.e. A and B were equal.) For virtually the whole input space of the algo, this state is temporary: the final GCD will move into A in the very next iteration, and stays there. But if there is no remaining iteration, we end up with the invalid output 0. This is illustrated by Ch. 15 GCD Counter-Example No. 2.

Second: the logic of steps 8...10 permits a condition where one or more rounds of the iteration execute without reducing the bitness of either A or B. Enough of these, and the algorithm in fact will not converge. This is illustrated by Ch. 15 GCD Counter-Example No. 1.

This time, the problem is that the subtractive step is performed without demanding (as we did starting with Algorithm 2 of Ch. 21A) that both A and B be odd. This can result in an iteration where we in fact get no closer to convergence than we were at the preceding one.

To understand exactly how the Ch.15 algo is subtly broken, let's pretend that we had a 8-bit FZ (for didactic purposes strictly; the minimal FZ bitness in FFA is 256); and then construct and illustrate a simple example, where two 8-bit quantities -- which, theoretically, would be expected to converge to their correct GCD (1) in 16 iterations -- fail to do so.

For clarity, this and all subsequent worked examples will show binary representations of A and B.

Sad_Ch15_GCD(0xfb, 0xdb) :

| i | Ai | Bi | AEven : | BEven : | Twos | A ← |A - B| | B ←?← A | Ai+1 | Bi+1 |

| A ← A/2 | B ← B/2 | ||||||||

| 1 | 11111011 | 11011011 | 0 | 100000 | 11011011 | 100000 | 11011011 | ||

| 2 | 100000 | 11011011 | 10000 | 0 | 11001011 | 10000 | 11001011 | 10000 | |

| 3 | 11001011 | 10000 | 1000 | 0 | 11000011 | 1000 | 11000011 | 1000 | |

| 4 | 11000011 | 1000 | 100 | 0 | 10111111 | 100 | 10111111 | 100 | |

| 5 | 10111111 | 100 | 10 | 0 | 10111101 | 10 | 10111101 | 10 | |

| 6 | 10111101 | 10 | 1 | 0 | 10111100 | 1 | 10111100 | 1 | |

| 7 | 10111100 | 1 | 1011110 | 0 | 1011101 | 1 | 1011101 | 1 | |

| 8 | 1011101 | 1 | 0 | 1011100 | 1 | 1011100 | 1 | ||

| 9 | 1011100 | 1 | 101110 | 0 | 101101 | 1 | 101101 | 1 | |

| 10 | 101101 | 1 | 0 | 101100 | 1 | 101100 | 1 | ||

| 11 | 101100 | 1 | 10110 | 0 | 10101 | 1 | 10101 | 1 | |

| 12 | 10101 | 1 | 0 | 10100 | 1 | 10100 | 1 | ||

| 13 | 10100 | 1 | 1010 | 0 | 1001 | 1 | 1001 | 1 | |

| 14 | 1001 | 1 | 0 | 1000 | 1 | 1000 | 1 | ||

| 15 | 1000 | 1 | 100 | 0 | 11 | 1 | 11 | 1 | |

| 16 | 11 | 1 | 0 | 10 | 1 | 10 | 1 | ||

| 17 | 10 | 1 | 1 | 0 | 0 | 1 | 0 | 1 | |

| 18 | 0 | 1 | 0 | 0 | 1 | 0 | 1 | 0 | |

| GCD (Incorrect) | 10 | ||||||||

The iterations marked in red, would have been necessary for successful convergence, but never execute; those marked in yellow, do not reduce the bitness of A or B.

The mine is in the fact that, if, at step 8 of the algo:

- D := |A - B|, C ← Borrow

- B := {C=0 → B, C=1 → A}

- A := D

... it so happens that A is even and B is odd, then D (and consequently the value of A in the next iteration) will be odd. And, if A ≥ B, then B will remain odd in the next iteration; and we will get an iteration cycle where the total bitness of A and B will not decrease, unless A and B happen to be close enough in value for the subtraction in step 8 to decrease it.

Thus we have seen how to construct a condition where carrying out an iteration of Ch. 15's GCD does not reduce the bitness of either A or B.

The interesting bit is that in virtually all possible inputs to GCD, this flaw does not lead to an ultimately incorrect output, because -- given sufficient iterations -- the correct answer is obtained. But in a very small number of possible input pairs, convergence will not be reached inside 2 × FZ_Bitness iterations.

It appears to be virtually impossible to arrive at the fatal condition by chance.

This kind of thing could be an ideal cryptological boobytrap, if GCD were in fact a key element in any known cryptosystem.

But AFAIK, there is no such cryptosystem. On top of this, from a cryptological point of view, the broken GCD "fails safe", i.e. it can be coaxed into reporting two co-prime inputs as being non-coprime, but not the opposite.

And, fortunately (or unfortunately, from the POV of quickly turning up possible defects) FFA does not currently use conventional-GCD inside any other internal algorithm. But let's consider where we have thus far made use of GCD.

Apart from the original Ch.15 tests, the only two places in the series where we have used GCD were the primorial generator demo depicted in Ch. 18C and the prime generator demo which used it.

The primorial generator was unaffected -- it apparently produces correct primorials for all valid FZ Widths from 256 to -- at least -- 16384.

The prime generator was also unaffected. In principle, a defective GCD would result, there, in a... slightly slower prime generator, which would attempt a larger number of doomed Miller-Rabin tests; GCD was used there strictly as speedup pre-filter for the intake candidates. But there was no detectable decrease in the number of M-R-failed runs after I put the corrected GCD into service.

The Ch. 21A material, interestingly, is also unaffected: the initially non-constant-time algo from the middle of Ch. 15 is given there as a starting point. And that algo (and all of the subsequent extensions offered) was... correct. Only the constant-time version which was used to actually write the GCD routine in Ch. 15, was not...

The bullet, it could be said, went through the hat, but not the head. Nevertheless, it is a serious defect, and will be corrected in this Chapter. And this time, the full proof of convergence will be given.

2. The Cure.

Let's rewrite the GCD algo, so that a reduction of the bitness of A, B, or both, is in fact guaranteed at every iteration.

First, we'll write a readability-optimized schematic version (expressed with branching logic) of the correct algorithm :

Chapter 21A-Bis Algorithm 1: Schematic Version of Corrected Constant-Time Iterative GCD :

For FZ integers A and B:

- Twos := 0

- Iterate Bitness(A) + Bitness(B) - 1 times:

- D := |A - B|

- If Odd(A) and Odd(B) :

- If A < B :

- B := A

- A := D

- If Even(A) and Even(B) :

- Twos := Twos + 1

- If Even(A):

- A := A / 2

- If Even(B):

- B := B / 2

- A := Bitwise-OR(A, B)

- A := A * 2Twos

- A contains the GCD.

Second, and equivalently, a properly constant-time formulation of the above :

Chapter 21A-Bis Algorithm 2: Corrected Constant-Time Iterative GCD :

For FZ integers A and B:

- Twos := 0

- Iterate Bitness(A) + Bitness(B) - 1 times:

- OO := (A AND 1) AND (B AND 1)

- D := |A - B|, C ← Borrow

- B := {(OO AND C)=0 → B, (OO AND C)=1 → A}

- A := {OO=0 → A, OO=1 → D}

- Ae := 1 - (A AND 1)

- Be := 1 - (B AND 1)

- A := A >> Ae

- B := B >> Be

- Twos := Twos + (Ae AND Be)

- A := A OR B

- A := A << Twos

- A contains the GCD.

Now, let's show, step by step, that Algorithm 1 and Algorithm 2 are arithmetically-equivalent.

| Algorithm 1 Operation | Description | Algorithm 2 Operation |

|

Initialize the common-power-of-two counter to 0. |

|

|

Begin a loop with exactly Bitness(A) + Bitness(B) - 1 iterations. In FFA, this is definitionally equivalent to 2×FZ_Bitness(N) - 1, where N is any of the inputs A, B or the output GCD. |

|

| Start of Iteration | ||

|

Compute the absolute value of the difference A - B. In the constant-time Algorithm 2, we save the carry bit to C, which will trigger subsequent operations predicated on the condition A < B. |

|

|

Determine whether both A and B are presently odd. |

|

|

Assign A to B if A and B were both odd, and A < B. |

|

|

Assign D to A if A and B were both odd. |

|

|

If both A and B are presently even, increment the common power-of-two counter. |

|

|

If A was found to be even, divide it by two. In Algorithm 2, this is accomplished via a Right Shift by 1. |

|

|

If B was found to be even, divide it by two. In Algorithm 2, this is accomplished via a Right Shift by 1. |

|

| End of Iteration | ||

|

Normally, B will contain the intermediate result after convergence. However, when calculating GCD(N, 0), where N > 0, it will be found in A. The other variable will always equal 0. Hence, we obtain the final result by performing a bitwise-OR of A, B. It is assigned to A. |

|

|

Reintroduce the common-power-of-two factor into the intermediate GCD result. If there was none (i.e. one or both inputs were odd to begin with) this will be 20, i.e. 1, and then has no effect. In Algorithm 2, the multiplication is accomplished via a constant-time Left Shift. |

|

|

A now contains the actual GCD of the two input integers. |

|

| GCD is in A. | ||

If you are entirely satisfied that Algorithm 1 and Algorithm 2 are equivalent in their effects, proceed to the proof below. For clarity, we will base it on Algorithm 1.

First, let's demonstrate that each iteration of the loop preserves the GCD of A, B :

| Algorithm 1 Operation | Identity |

|

A restatement of Euclid's original : GCD(A, B) = GCD(|A - B|, Min(A, B))

Observe that |A - B|, the new value of A, is necessarily even, while |

|

A common factor of 2 will be removed (in steps 11 and 13) from A and B, respectively, and the Twos counter is incremented, so we can multiply 2Twos back in at step 15. GCD(2 × K, 2 × L) = 2 × GCD(K, L) |

|

A factor of 2 is removed from A. GCD(2 × K, B) = GCD(K, B) |

|

A factor of 2 is removed from B. GCD(A, 2 × L) = GCD(A, L) |

Now, let's confirm that Algorithm 1 is in fact guaranteed to converge within at most Bitness(A) + Bitness(B) - 1 iterations. Let's exhaustively describe the effects of an iteration across the four possible combinations of parities of A and B. (Steps 8 and 9 do not affect the bitness of A or B and will be omitted.)

| A is Odd, B is Odd : | ||

| Algorithm 1 Operation | Effect on Bitness of A | Effect on Bitness of B |

|

No guaranteed effect (but may decrease by 1 or more bits). However, A is now necessarily even. | No guaranteed effect (but may decrease by 1 or more bits). B remains odd. |

|

As A is guaranteed to have become even in step 7: decreases by 1 bit. | None |

|

None | None |

| Net Effect on Bitnesses: | Decreased by at least 1 bit. May become zero if A and B had been equal. | None; may decrease by 1 or more bits. |

Note that in the convergence case where A=1 B=1, the above parity combination will yield A=0 B=1.

| A is Odd, B is Even : | ||

| Algorithm 1 Operation | Effect on Bitness of A | Effect on Bitness of B |

|

None | None |

|

None | None |

|

None | Decreases by 1 bit, unless B = 0. |

| Net Effect on Bitnesses: | None | Decreased by 1 bit, unless B = 0. |

| A is Even, B is Odd : | ||

| Algorithm 1 Operation | Effect on Bitness of A | Effect on Bitness of B |

|

None | None |

|

Decreases by 1 bit, unless A = 0. | None |

|

None | None |

| Net Effect on Bitnesses: | Decreased by 1 bit, unless A = 0. | None |

| A is Even, B is Even : | ||

| Algorithm 1 Operation | Effect on Bitness of A | Effect on Bitness of B |

|

None | None |

|

Decreases by 1 bit, unless A = 0. | None |

|

None | Decreases by 1 bit, unless B = 0. |

| Net Effect on Bitnesses: | Decreased by 1 bit, unless A = 0. | Decreased by 1 bit, unless B = 0. |

We have shown that each iteration of Algorithm 1 (and, as we have demonstrated their equivalence -- Algorithm 2) is guaranteed to reduce the bitness of A, B, or of both A and B, by at least 1 bit -- supposing we have not yet reached convergence (i.e. when nothing can be reduced because one of A or B is equal to zero, while the other is odd.)

Therefore, to compute the GCD of A, B where each is of bitness W, we in fact need at most (2 × W) - 1 iterations (i.e. to arrive at a GCD of 1, which is of bitness 1.)

Now, let's illustrate two concrete symmetric cases where the maximum permitted number of iterations is in fact required. Each of these pairs of 8-bit inputs demands 15 (i.e. ( 2 × 8 ) - 1) shots for convergence.

GCD(0x80, 0xff) :

| i | Ai | Bi | AOdd and BOdd : | AEven : | BEven : | Twos | Ai+1 | Bi+1 | |

| A ← |A - B| | B ← A | A ← A/2 | B ← B/2 | ||||||

| 1 | 10000000 | 11111111 | 1000000 | 0 | 1000000 | 11111111 | |||

| 2 | 1000000 | 11111111 | 100000 | 0 | 100000 | 11111111 | |||

| 3 | 100000 | 11111111 | 10000 | 0 | 10000 | 11111111 | |||

| 4 | 10000 | 11111111 | 1000 | 0 | 1000 | 11111111 | |||

| 5 | 1000 | 11111111 | 100 | 0 | 100 | 11111111 | |||

| 6 | 100 | 11111111 | 10 | 0 | 10 | 11111111 | |||

| 7 | 10 | 11111111 | 1 | 0 | 1 | 11111111 | |||

| 8 | 1 | 11111111 | 11111110 | 1 | 1111111 | 0 | 1111111 | 1 | |

| 9 | 1111111 | 1 | 1111110 | 1 | 111111 | 0 | 111111 | 1 | |

| 10 | 111111 | 1 | 111110 | 1 | 11111 | 0 | 11111 | 1 | |

| 11 | 11111 | 1 | 11110 | 1 | 1111 | 0 | 1111 | 1 | |

| 12 | 1111 | 1 | 1110 | 1 | 111 | 0 | 111 | 1 | |

| 13 | 111 | 1 | 110 | 1 | 11 | 0 | 11 | 1 | |

| 14 | 11 | 1 | 10 | 1 | 1 | 0 | 1 | 1 | |

| 15 | 1 | 1 | 0 | 1 | 0 | 0 | 0 | 1 | |

| GCD | 1 | ||||||||

GCD(0xff, 0x80) :

| i | Ai | Bi | AOdd and BOdd : | AEven : | BEven : | Twos | Ai+1 | Bi+1 | |

| A ← |A - B| | B ← A | A ← A/2 | B ← B/2 | ||||||

| 1 | 11111111 | 10000000 | 1000000 | 0 | 11111111 | 1000000 | |||

| 2 | 11111111 | 1000000 | 100000 | 0 | 11111111 | 100000 | |||

| 3 | 11111111 | 100000 | 10000 | 0 | 11111111 | 10000 | |||

| 4 | 11111111 | 10000 | 1000 | 0 | 11111111 | 1000 | |||

| 5 | 11111111 | 1000 | 100 | 0 | 11111111 | 100 | |||

| 6 | 11111111 | 100 | 10 | 0 | 11111111 | 10 | |||

| 7 | 11111111 | 10 | 1 | 0 | 11111111 | 1 | |||

| 8 | 11111111 | 1 | 11111110 | 1 | 1111111 | 0 | 1111111 | 1 | |

| 9 | 1111111 | 1 | 1111110 | 1 | 111111 | 0 | 111111 | 1 | |

| 10 | 111111 | 1 | 111110 | 1 | 11111 | 0 | 11111 | 1 | |

| 11 | 11111 | 1 | 11110 | 1 | 1111 | 0 | 1111 | 1 | |

| 12 | 1111 | 1 | 1110 | 1 | 111 | 0 | 111 | 1 | |

| 13 | 111 | 1 | 110 | 1 | 11 | 0 | 11 | 1 | |

| 14 | 11 | 1 | 10 | 1 | 1 | 0 | 1 | 1 | |

| 15 | 1 | 1 | 0 | 1 | 0 | 0 | 0 | 1 | |

| GCD | 1 | ||||||||

In both of these examples, Bitness(A)+Bitness(B) is reduced by exactly one in each iteration. And we have already demonstrated that it is impossible to construct a case -- aside from the convergence case -- where an iteration will reduce this quantity by 0. So in fact these are instances of the "worst case" number of required iterations. (Recall that we are writing a constant-time algo, and the "worst case" number of iterations will always execute.)

Now, let's illustrate what happens in the "degenerate" cases. Starting with GCD(0,0) :

GCD(0, 0) :

| i | Ai | Bi | AOdd and BOdd : | AEven : | BEven : | Twos | Ai+1 | Bi+1 | |

| A ← |A - B| | B ← A | A ← A/2 | B ← B/2 | ||||||

| 1 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | ||

| 2 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | ||

| 3 | 0 | 0 | 0 | 0 | 3 | 0 | 0 | ||

| ... | 0 | 0 | 0 | 0 | ... | 0 | 0 | ||

| iLast | 0 | 0 | 0 | 0 | i | 0 | 0 | ||

| GCD | 0 | ||||||||

For illustrative purposes, three iterations are shown. But the end result, without regard to FZ Width, will always be the same: 0. The Twos counter increases with each additional iteration, as both A and B remain even, but this has no effect on the final output.

Now, let's examine the case GCD(0, N) where N > 0 :

GCD(0, 3) :

| i | Ai | Bi | AOdd and BOdd : | AEven : | BEven : | Twos | Ai+1 | Bi+1 | |

| A ← |A - B| | B ← A | A ← A/2 | B ← B/2 | ||||||

| 1 | 0 | 11 | 0 | 0 | 0 | 11 | |||

| 2 | 0 | 11 | 0 | 0 | 0 | 11 | |||

| 3 | 0 | 11 | 0 | 0 | 0 | 11 | |||

| ... | 0 | 11 | 0 | 0 | 0 | 11 | |||

| iLast | 0 | 11 | 0 | 0 | 0 | 11 | |||

| GCD | 11 | ||||||||

It should be clear at this point that once A has become equal to 0, and B is odd, further iterations have no effect.

Lastly, let's illustrate the "interesting", from the POV of the new algo, degenerate case: GCD(N, 0) where N > 0 :

GCD(3, 0) :

| i | Ai | Bi | AOdd and BOdd : | AEven : | BEven : | Twos | Ai+1 | Bi+1 | |

| A ← |A - B| | B ← A | A ← A/2 | B ← B/2 | ||||||

| 1 | 11 | 0 | 0 | 0 | 11 | 0 | |||

| 2 | 11 | 0 | 0 | 0 | 11 | 0 | |||

| 3 | 11 | 0 | 0 | 0 | 11 | 0 | |||

| ... | 11 | 0 | 0 | 0 | 11 | 0 | |||

| iLast | 11 | 0 | 0 | 0 | 11 | 0 | |||

| GCD | 11 | ||||||||

In this and only this case, the intermediate result will wind up in A rather than in B, given as the subtractive step demands an odd A and odd B, and this never happens in the (N, 0) case.

This is why we need step 14: A := Bitwise-OR(A, B).

Let's conclude with a second arbitrary example of the above :

GCD(0x80, 0x0) :

| i | Ai | Bi | AOdd and BOdd : | AEven : | BEven : | Twos | Ai+1 | Bi+1 | |

| A ← |A - B| | B ← A | A ← A/2 | B ← B/2 | ||||||

| 1 | 10000000 | 0 | 1000000 | 0 | 1 | 1000000 | 0 | ||

| 2 | 1000000 | 0 | 100000 | 0 | 2 | 100000 | 0 | ||

| 3 | 100000 | 0 | 10000 | 0 | 3 | 10000 | 0 | ||

| 4 | 10000 | 0 | 1000 | 0 | 4 | 1000 | 0 | ||

| 5 | 1000 | 0 | 100 | 0 | 5 | 100 | 0 | ||

| 6 | 100 | 0 | 10 | 0 | 6 | 10 | 0 | ||

| 7 | 10 | 0 | 1 | 0 | 7 | 1 | 0 | ||

| 8 | 1 | 0 | 0 | 7 | 1 | 0 | |||

| ... | 1 | 0 | 0 | 7 | 1 | 0 | |||

| iLast | 1 | 0 | 0 | 7 | 1 | 0 | |||

| GCD | 10000000 | ||||||||

At this point, there is not much else left to say about the algorithm per se.

And now, let's see the implementation of the new GCD:

package body FZ_GCD is -- Find Greatest Common Divisor (GCD) of X and Y. -- Note that by convention, GCD(0, 0) = 0. procedure FZ_Greatest_Common_Divisor(X : in FZ; Y : in FZ; Result : out FZ) is -- Widths of X, Y, and Result are equal subtype Width is Word_Index range X'Range; -- Working buffers for GCD computation, initially equal to the inputs A : FZ(Width) := X; B : FZ(Width) := Y; -- Evenness (negation of lowest bit) of A and B respectively Ae, Be : WBool; -- Common power-of-2 factor: incremented when Ae and Be are both 1 Twos : Word := 0; -- This flag is set when A and B are BOTH ODD OO : WBool; -- |A - B| D : FZ(Width); -- This flag is set iff A < B A_lt_B : WBool; begin -- To converge, requires number of shots equal to (2 * FZ_Bitness) - 1: for i in 1 .. (2 * FZ_Bitness(X)) - 1 loop -- Whether A and B are currently BOTH ODD : OO := FZ_OddP(A) and FZ_OddP(B); -- D := |A - B| FZ_Sub_Abs(X => A, Y => B, Difference => D, Underflow => A_lt_B); -- IFF A,B both ODD, and A < B : B' := A ; otherwise no change : FZ_Mux(X => B, Y => A, Result => B, Sel => OO and A_lt_B); -- IFF A,B both ODD: A' := |A - B| ; otherwise no change : FZ_Mux(X => A, Y => D, Result => A, Sel => OO); -- If A is now EVEN: A := A >> 1; otherwise no change Ae := 1 - FZ_OddP(A); FZ_ShiftRight(A, A, WBit_Index(Ae)); -- If B is now EVEN: B := B >> 1; otherwise no change Be := 1 - FZ_OddP(B); FZ_ShiftRight(B, B, WBit_Index(Be)); -- If both A and B were even, increment the common power-of-two Twos := Twos + (Ae and Be); end loop; -- Normally, B will contain the GCD, but in the (N,0) N > 0 case -- A. -- The other variable will always equal 0. Hence, take Bitwise-OR(A,B): FZ_Or(X => A, Y => B, Result => A); -- Reintroduce the common power-of-2 factor stored in 'Twos' FZ_Quiet_ShiftLeft(N => A, ShiftedN => A, Count => Indices(Twos)); -- Output final result -- the GCD. Result := A; end FZ_Greatest_Common_Divisor; end FZ_GCD; |

It is very slightly more expensive than the Ch.15 routine: by one MUX (in the loop) and one FZ_Or (outside of the loop.) But this is not a tragedy. Overall it comes out to cost just about the same, after taking into account the reduced (by one) number of iterations.

Unsurprisingly, this corrected variant of GCD passes the Ch.15 test battery; properly swallows both of the Ch.15 counter-examples given earlier; and, of course, the...

Ch. 21A-Bis GCD "Worst Case" Tape for 256-bit FZ :

.8000000000000000000000000000000000000000000000000000000000000000 .FFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFF G # |

... correctly produces the output 1, converging -- as predicted -- in the 511th and final iteration.

To generate this test for any FZ Width, all you need is:

Ch. 21A-Bis GCD "Worst Case" Tape for arbitrary FZ Width :

.1 .0~W.1- LS .0~ G # |

~ The next Chapter, 21B, will continue the Extended-GCD sequence of Chapter 21A. ~